AI Governance in Life Sciences: From Principles to Execution

Explore AI governance in life sciences and move from principles to execution with traceability, validation, compliance, and audit-ready GxP workflows.


1.0. Introduction: From Principles to Continuous Governance

AI governance in life sciences is no longer a future concern; it is a current regulatory expectation.

In previous articles, we unpacked core AI governance principles in GxP environments and explored how organizations can operationalize them. As AI adoption accelerates across clinical, safety, and manufacturing functions, a new challenge emerges: keeping governance aligned with regulatory expectations that continue to evolve.

Regulators are already active. Agencies such as the FDA and EMA are shaping AI governance expectations, emphasizing risk-based oversight, lifecycle management, and traceability. The implication is clear: organizations can no longer treat governance as a one-time framework or documentation exercise. It must be continuous, embedded, and inspection-ready by design.

This third installment focuses on aligning with evolving expectations, moving from isolated governance efforts to enterprise-scale, regulator-aligned AI governance.

Today, the question is no longer whether you govern AI, but how effectively and continuously you can prove it.

2.0. Why AI Governance is Mission-Critical in Life Sciences

AI is no longer experimental in pharma; it is embedded across clinical development, pharmacovigilance, manufacturing, and regulatory operations. This reliance invites increased scrutiny.

AI governance is fundamental to:

- Patient Safety: Ensuring AI-driven decisions avoid unintended risks

- Regulatory Compliance: Aligning with GxP expectations and evolving AI-specific guidance

- Trust & Transparency: Building confidence among regulators, stakeholders, and patients

Without robust governance, AI systems risk becoming opaque, untraceable, and non-compliant, which can delay approvals or trigger regulatory action.

3.0. Global Regulatory Landscape: FDA & EMA Expectations

The FDA and EMA are actively shaping the frameworks that guide responsible AI use in regulated environments. Their approaches differ slightly but converge on four key themes:

1. Risk-Based Approach to AI

Regulators stress that AI governance should match system impact:

- High-risk systems (e.g., clinical decision support) require rigorous validation and oversight

- Lower-risk applications may follow lighter controls but still require accountability

This aligns with traditional GxP thinking: risk dictates control rigor.
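To make "risk dictates control rigor" concrete, here is a minimal Python sketch of a risk-tier lookup. Everything in it is an illustrative assumption rather than anything prescribed by the FDA or EMA: the three tiers, the classification criteria (`affects_patient_safety`, `supports_gxp_decision`, `human_review_of_output`), and the control lists are hypothetical placeholders for whatever your own quality system defines.

```python
from dataclasses import dataclass
from enum import Enum


class RiskTier(Enum):
    LOW = "low"
    MEDIUM = "medium"
    HIGH = "high"


@dataclass(frozen=True)
class AISystem:
    name: str
    affects_patient_safety: bool   # hypothetical criterion
    supports_gxp_decision: bool    # hypothetical criterion
    human_review_of_output: bool   # hypothetical criterion


# Hypothetical control sets; real ones come from your own quality system.
CONTROLS = {
    RiskTier.HIGH: ["full validation protocol", "continuous monitoring", "independent QA review"],
    RiskTier.MEDIUM: ["targeted validation", "periodic performance review"],
    RiskTier.LOW: ["documented intended use", "named owner"],
}


def classify(system: AISystem) -> RiskTier:
    """Map a system's impact to a tier; higher impact means stricter controls."""
    if system.affects_patient_safety:
        return RiskTier.HIGH
    if system.supports_gxp_decision and not system.human_review_of_output:
        return RiskTier.HIGH
    if system.supports_gxp_decision:
        return RiskTier.MEDIUM
    return RiskTier.LOW


def required_controls(system: AISystem) -> list:
    """Return the controls that apply to the system's tier."""
    return CONTROLS[classify(system)]


cds = AISystem("clinical-decision-support", True, True, True)
print(classify(cds).value)  # high
```

Note that even the lowest tier still carries controls (a documented intended use and a named owner), which mirrors the point that lighter controls do not remove accountability.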

2. Lifecycle-Based Oversight

AI is not “validated once and done”. Regulators expect:

- Continuous monitoring to ensure smooth operation and quick issue detection.

- Periodic revalidation to confirm models remain accurate and adapt to changes.

- Change management for updates to reduce risk and communicate with stakeholders.

3. Transparency & Explainability

Organizations must be able to:

- Explain how AI models make decisions, detailing processes and influencing factors.

- Demonstrate reproducibility by showing consistent results under the same conditions.

- Provide clear audit trails documenting decision-making for review and accountability.

4. Data Integrity & Traceability

Borrowing from ALCOA+ principles, regulators expect:

- Complete lineage of training data recording origin, transformations, and processing.

- Version control of models to track changes and enable rollbacks.

- Full traceability from input data through processing to outputs for transparency and accountability.
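One way to picture input-to-output traceability is a lineage record that ties a content hash of the training data, the list of transformations, the model version, and hashes of a specific input and output into a single fingerprint. The sketch below is a simplified illustration using only the Python standard library; the `LineageRecord` class and its field names are hypothetical, not part of any regulatory standard or product.

```python
import hashlib
import json
from dataclasses import asdict, dataclass


def sha256_bytes(data: bytes) -> str:
    """Content hash used to identify a dataset, input, or output."""
    return hashlib.sha256(data).hexdigest()


@dataclass(frozen=True)
class LineageRecord:
    # All field names are illustrative assumptions.
    dataset_sha256: str      # hash of the training data snapshot
    transformations: tuple   # ordered preprocessing steps applied
    model_version: str       # version identifier of the model
    input_sha256: str        # hash of the inference input
    output_sha256: str       # hash of the produced output

    def fingerprint(self) -> str:
        """Deterministic hash over the whole record."""
        payload = json.dumps(asdict(self), sort_keys=True).encode("utf-8")
        return sha256_bytes(payload)


record = LineageRecord(
    dataset_sha256=sha256_bytes(b"training-data-snapshot"),
    transformations=("deduplicate", "normalize_units"),
    model_version="1.4.0",
    input_sha256=sha256_bytes(b"case-report-123"),
    output_sha256=sha256_bytes(b"classification: serious"),
)
print(record.fingerprint())
```

Because the fingerprint is computed over every field, a change to the data, the preprocessing, or the model version yields a different value, so a given output can always be tied back to the exact combination that produced it.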

4.0. Documentation & Traceability: The Backbone of Compliance

Documentation is one of the most underestimated aspects of AI governance.

To meet FDA and EMA expectations, organizations must maintain:

- Model Documentation: Architecture, training data sources, assumptions

- Validation Records: Test cases, performance metrics, acceptance criteria

- Change Logs: Version updates, retraining events, parameter changes

- Audit Trails: Who did what, when, and why

This traceability is essential for compliance and inspection readiness.
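As an illustration of "who did what, when, and why", the following sketch shows an append-only audit trail in which each entry includes the hash of the previous entry, so that later edits to earlier entries can be detected. This is one possible design under simplifying assumptions (in-memory storage, no electronic signatures, no access control); the `AuditTrail` class and its method names are hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _hash_body(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


class AuditTrail:
    """In-memory, hash-chained audit trail (illustrative only)."""

    def __init__(self):
        self._entries = []

    def record(self, who: str, what: str, why: str, when: str = None) -> dict:
        """Append an entry capturing who did what, when, and why."""
        when = when or datetime.now(timezone.utc).isoformat()
        prev_hash = self._entries[-1]["hash"] if self._entries else GENESIS_HASH
        body = {"who": who, "what": what, "when": when, "why": why, "prev_hash": prev_hash}
        entry = {**body, "hash": _hash_body(body)}
        self._entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute the chain; return False if any entry was altered."""
        prev_hash = GENESIS_HASH
        for entry in self._entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or entry["hash"] != _hash_body(body):
                return False
            prev_hash = entry["hash"]
        return True


trail = AuditTrail()
trail.record("j.doe", "retrained model 1.4.0", "quarterly data refresh")
trail.record("qa.lead", "approved model 1.4.0", "validation criteria met")
print(trail.verify())  # True
```

A production system would persist entries in controlled storage and tie each `who` to an authenticated identity; the chain here only demonstrates how tampering becomes evident.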

5.0. From Principles to Practice: A Governance Framework for AI

To operationalize compliance, organizations need a governance model defining:

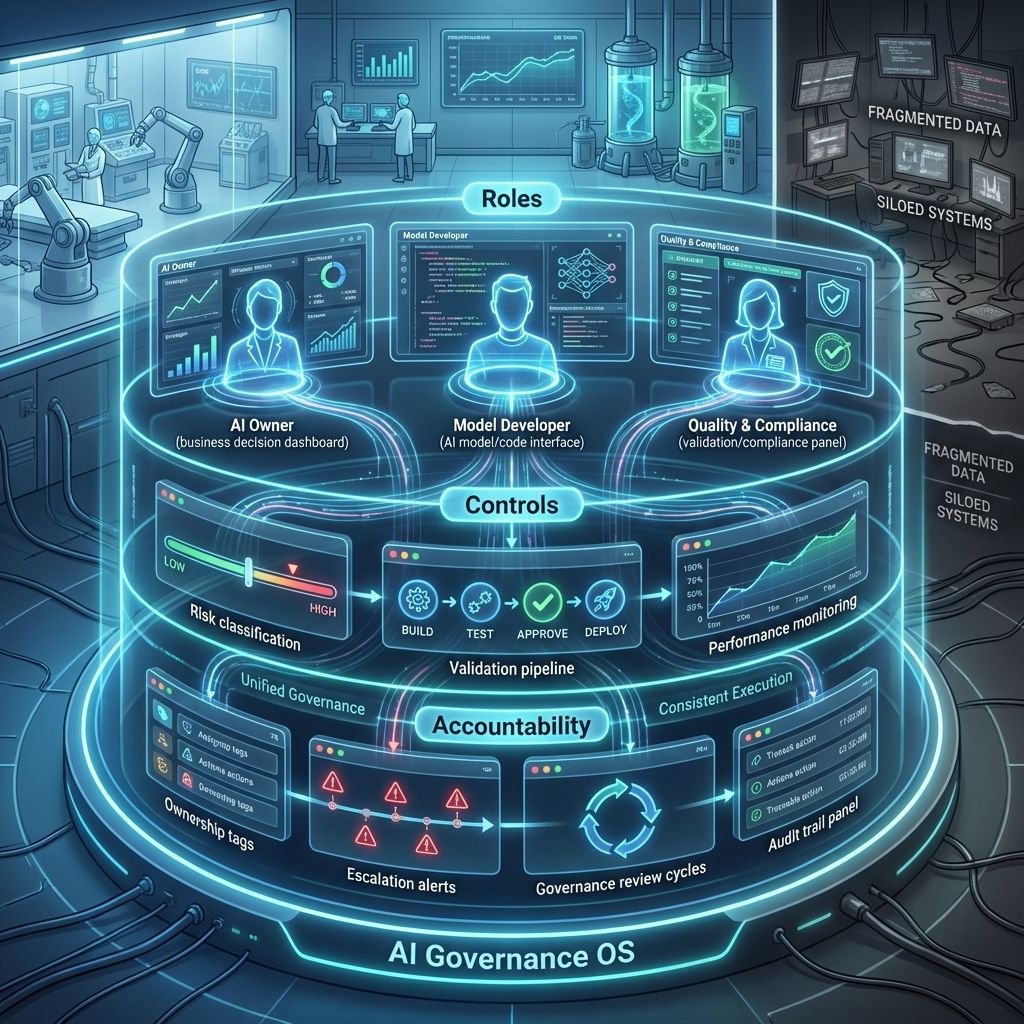

1. Roles & Responsibilities

- AI Owners: Accountable for business outcomes and responsible AI use.

- Model Developers: Design, build, and maintain AI models.

- Quality & Compliance Teams: Ensure validation, oversight, and regulatory compliance.

2. Controls & Processes

- Risk Classification: Categorize AI systems by impact and criticality.

- Validation Protocols: Validate models according to intended use.

- Performance Monitoring: Continuously track and assess model performance.

3. Accountability Mechanisms

- Ownership: Define clear responsibility for AI-driven decisions.

- Escalation: Establish pathways to address model failures quickly.

- Governance Reviews: Conduct periodic reviews to ensure ongoing compliance.

Many organizations struggle not with defining principles, but with consistent implementation across systems.

6.0. xLM’s Continuous Intelligent Validation (cIV): Embedding Governance and Enabling Scalable AI Compliance

xLM's Continuous Intelligent Validation (cIV) bridges regulatory expectations and real-world implementation by embedding AI governance within regulated environments.

With cIV, organizations can:

- Embed governance controls into AI workflows.

- Ensure end-to-end traceability across models, data, and decisions.

- Maintain inspection-ready documentation automatically.

- Align AI operations with FDA and EMA expectations.

Beyond governance, cIV tackles a major barrier to AI adoption in pharma: validation.

Traditional validation falls short for modern AI systems that:

- Continuously learn and adapt to new data and environments.

- Require frequent updates to maintain performance.

- Exhibit complex, data-driven behaviors.

cIV enables continuous validation, ensuring AI systems remain compliant, reliable, and audit-ready throughout their lifecycle, making compliance a built-in feature rather than an afterthought.

7.0. How xLM’s cIV Supports FDA & EMA-Aligned Validation

cIV enables organizations to validate AI systems in line with regulatory expectations across five areas:

1. Risk-Based Validation Framework

- Adjusts validation strictness based on system impact, applying testing proportional to operational effect.

- Ensures compliance fits needs without overloading teams, balancing requirements and workload.

2. Continuous Validation & Monitoring

- Automatically revalidates models when data or systems change, maintaining accuracy without manual effort.

- Monitors performance drift and alerts on major deviations, ensuring reliability and prompt fixes.
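For readers unfamiliar with drift monitoring, the sketch below shows one generic way to quantify it: the Population Stability Index (PSI) between a baseline sample and a recent sample of a model input or score. This is not a description of how cIV works internally; the function names, the 10-bin layout, and the 0.25 alert threshold (a commonly cited rule of thumb) are assumptions chosen for illustration.

```python
import math


def population_stability_index(expected, actual, bins=10):
    """PSI between a baseline sample and a recent sample.

    Bins are equal-width over the baseline range; values outside
    that range are clamped into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    if hi == lo:
        raise ValueError("baseline sample has no spread")
    width = (hi - lo) / bins

    def proportions(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor at a small epsilon so empty bins do not produce log(0).
        return [max(c / len(values), 1e-6) for c in counts]

    p = proportions(expected)
    q = proportions(actual)
    return sum((qi - pi) * math.log(qi / pi) for pi, qi in zip(p, q))


def needs_revalidation(expected, actual, threshold=0.25):
    """Flag a model for revalidation when drift exceeds the threshold.

    The 0.25 default is an assumed rule of thumb, not a regulatory limit.
    """
    return population_stability_index(expected, actual) > threshold


baseline = [i / 100 for i in range(100)]
recent = [0.5 + i / 200 for i in range(100)]  # shifted toward higher values
print(needs_revalidation(baseline, baseline))  # False
print(needs_revalidation(baseline, recent))    # True
```

In practice the threshold, the monitored features, and the action taken on an alert would be defined in the validation plan according to the system's risk tier.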

3. Full Traceability

- Links data to model, validation, and output, creating a seamless information flow.

- Maintains detailed audit trails that are ready for inspection, ensuring traceability and accountability.

4. Automated Documentation

- Generates validation reports aligned with regulatory standards, supporting audits.

- Reduces manual effort while improving consistency, streamlining workflows and minimizing errors.

5. Change Management

- Tracks model updates and retraining cycles to maintain accuracy and performance.

- Ensures every change is assessed, documented, and approved before implementation.
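The "assessed, documented, and approved before implementation" rule can be thought of as a small state machine in which a change cannot skip a step. The sketch below is a generic illustration, not cIV's implementation; the state names, the `ChangeRequest` class, and the `advance` method are all hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum


class ChangeState(Enum):
    PROPOSED = "proposed"
    ASSESSED = "assessed"
    APPROVED = "approved"
    IMPLEMENTED = "implemented"


# Each state has exactly one permitted successor, so no step can be skipped.
NEXT_STATE = {
    ChangeState.PROPOSED: ChangeState.ASSESSED,
    ChangeState.ASSESSED: ChangeState.APPROVED,
    ChangeState.APPROVED: ChangeState.IMPLEMENTED,
}


@dataclass
class ChangeRequest:
    change_id: str
    description: str
    state: ChangeState = ChangeState.PROPOSED
    history: list = field(default_factory=list)

    def advance(self, to: ChangeState, actor: str, note: str) -> None:
        """Move to the next state and document who did it and why."""
        if NEXT_STATE.get(self.state) is not to:
            raise ValueError(
                f"cannot move from {self.state.value} to {to.value}"
            )
        self.history.append((self.state.value, to.value, actor, note))
        self.state = to


cr = ChangeRequest("CR-001", "retrain model on refreshed data")
cr.advance(ChangeState.ASSESSED, "model.owner", "impact limited to scoring module")
cr.advance(ChangeState.APPROVED, "qa.lead", "risk assessment accepted")
cr.advance(ChangeState.IMPLEMENTED, "ml.engineer", "deployed after approval")
print(cr.state.value)  # implemented
```

Attempting to implement a change that has only been proposed raises a `ValueError`, and the `history` list doubles as the documentation of each transition.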

8.0. Closing Insights: From Compliance Burden to Competitive Advantage

AI regulation in life sciences is evolving rapidly. Organizations that invest in governance now will be positioned to:

- Accelerate regulatory approvals

- Reduce compliance risks

- Build long-term trust with regulators

AI governance is not a constraint; it enables scalable, trustworthy innovation.

By aligning with FDA and EMA expectations, adopting risk-based governance, and leveraging platforms like xLM’s cIV, life sciences organizations can turn compliance from a burden into a strategic advantage.

In this new era of AI-driven pharma, trust is the currency and governance is how you earn it.
