Engineering Trust in AI: GxP Validation & FDA Framework

Explore the FDA's AI credibility framework in GxP environments. Learn how validation, traceability, and continuous monitoring produce compliant, scalable, and audit-ready AI systems.


1.0. Introduction: AI Enters the Credibility Era

In the first three editions, we explored how AI in life sciences evolves from regulatory intent to operational reality. We began with the guiding principles shaped by regulators, addressed the practical challenges of applying those principles in GxP environments, and examined how execution systems embed governance into workflows.

Now, with the latest draft guidance from the U.S. FDA, the conversation has matured. The introduction of a structured credibility assessment framework signals a decisive shift: AI is no longer evaluated by capability alone. It must be validated, traceable, and defensible within a clearly defined Context of Use (COU).

This marks a new phase in which AI systems must not only function effectively but also withstand regulatory scrutiny, backed by complete, consistent, and continuously maintained evidence.

2.0. From Governance to Credibility: Completing the Regulatory Arc

Across the first three newsletters, a clear trajectory emerged. Regulatory bodies established that AI must be governed with the same rigor as any GxP system. Organizations then embedded AI into workflows to generate validation documents, automate processes, and accelerate decision-making. Finally, execution platforms became essential for managing AI-driven workflows in a compliant and auditable way.

The FDA framework builds on this foundation and introduces a stricter expectation: governance must result in demonstrable credibility. It is no longer enough to define policies or implement controls. Organizations must show, with evidence, that an AI model is reliable for a specific purpose, under specific conditions, and over time.

Many current approaches fall short. Governance frameworks often exist at a policy level, while execution systems focus on workflow efficiency. What is missing is a unified capability connecting intent, execution, and evidence into a continuous validation lifecycle.

3.0. The FDA Credibility Framework as a Validation Lifecycle

The FDA’s seven-step framework can be read as a modern validation lifecycle for AI models. It begins by defining the question of interest and the model's Context of Use, then covers risk assessment, validation planning, execution, documentation, and a final evaluation of whether the model is adequate for that context.

This lifecycle differs from traditional validation in its dynamic, context-sensitive nature. The Context of Use anchors all validation activities. Any change to the COU, whether in data, process conditions, or deployment environment, can invalidate prior evidence and require reassessment.
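The dependency between evidence and COU can be made concrete with a small sketch. This is an illustration under stated assumptions, not part of the FDA framework or of any product: the `ContextOfUse` fields, the `ValidationEvidence` record, and the hashing scheme are all hypothetical. Evidence is pinned to a fingerprint of the COU it was produced under, so any change to the COU makes the mismatch visible.

```python
import hashlib
import json
from dataclasses import asdict, dataclass, replace


@dataclass(frozen=True)
class ContextOfUse:
    """Hypothetical COU record; the field names are illustrative only."""
    question_of_interest: str
    data_source: str
    process_conditions: str
    deployment_environment: str

    def fingerprint(self) -> str:
        # Hash a canonical JSON form so any field change yields a new fingerprint.
        canonical = json.dumps(asdict(self), sort_keys=True)
        return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class ValidationEvidence:
    """Evidence is pinned to the COU fingerprint it was generated under."""
    summary: str
    cou_fingerprint: str


def evidence_is_current(evidence: ValidationEvidence, cou: ContextOfUse) -> bool:
    # Evidence only counts while the COU it was produced under is unchanged.
    return evidence.cou_fingerprint == cou.fingerprint()


original = ContextOfUse(
    question_of_interest="Does the batch meet release criteria?",
    data_source="site-A historian",
    process_conditions="20-25 C",
    deployment_environment="on-prem",
)
evidence = ValidationEvidence("accuracy study v1", original.fingerprint())

# Changing only the deployment environment is enough to flag reassessment.
changed = replace(original, deployment_environment="cloud")

print(evidence_is_current(evidence, original))  # True
print(evidence_is_current(evidence, changed))   # False
```

Whether a flagged change actually requires full revalidation remains a judgment call; the sketch only guarantees that the change cannot go unnoticed.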

This marks a fundamental shift. Validation is no longer a one-time event tied to system release. It becomes a living process, evolving continuously with the model and its environment.

4.0. Why Traditional Validation Approaches Fall Short

Many organizations still use static validation methods. Documentation is generated at milestones, reviews are periodic, and systems are validated against predefined requirements. While effective for deterministic systems, this approach struggles with AI's adaptive, data-driven nature.

AI-generated outputs are regulated content. Validation must extend beyond the system to include its outputs. Traceability, reproducibility, and accountability must be maintained not only at the system level but across every AI-generated artifact.

The third newsletter emphasized embedding governance into workflows. However, without a structured validation layer continuously linking outputs to their models, data, and context, execution alone cannot ensure credibility.

This gap must now be addressed.

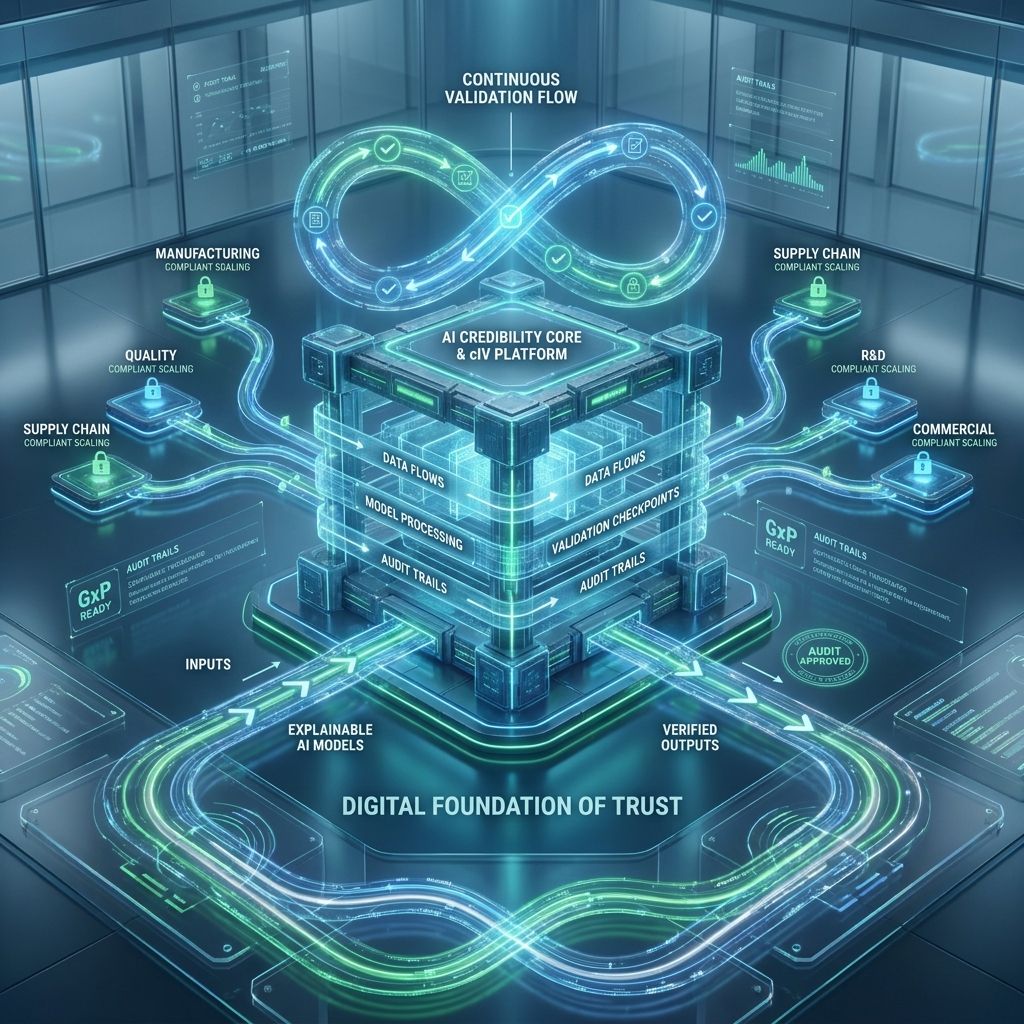

5.0. cIV: Redefining Validation as a Continuous System

cIV (Continuous Intelligence Validation) turns validation into a continuous, integrated process. It operationalizes the FDA’s framework by enforcing validation throughout the AI lifecycle.

In cIV, model validation starts with a clear definition of the question of interest, which guides every subsequent validation activity. The model’s intended use is then specified so that validation stays purpose-driven and aligned with the applicable regulations.

The Context of Use is captured in a structured, version-controlled form that details data sources, conditions, environments, and users. Because the COU is treated as dynamic, cIV can track changes to it and link each change to the validation work it triggers.
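One way to picture a version-controlled COU is an append-only history with a field-level diff between revisions. The following is a minimal sketch, not a description of how cIV stores the COU: the `CouHistory` class, its methods, and the example field values are hypothetical.

```python
import copy
from dataclasses import dataclass, field


@dataclass
class CouHistory:
    """Append-only list of COU revisions; each revision is a plain dict."""
    revisions: list = field(default_factory=list)

    def commit(self, cou: dict) -> int:
        # Deep-copy so later edits to the caller's dict cannot alter history.
        self.revisions.append(copy.deepcopy(cou))
        return len(self.revisions)  # 1-based version number

    def changed_fields(self, old_version: int, new_version: int) -> dict:
        """Return {field: (old_value, new_value)} for fields that differ."""
        old = self.revisions[old_version - 1]
        new = self.revisions[new_version - 1]
        keys = sorted(set(old) | set(new))
        return {k: (old.get(k), new.get(k)) for k in keys if old.get(k) != new.get(k)}


history = CouHistory()
v1 = history.commit({
    "data_sources": ["LIMS"],
    "conditions": "commercial batches only",
    "environment": "validated production",
    "users": ["QA reviewer"],
})
v2 = history.commit({
    "data_sources": ["LIMS", "MES"],  # a new data source was added
    "conditions": "commercial batches only",
    "environment": "validated production",
    "users": ["QA reviewer"],
})

print(history.changed_fields(v1, v2))
# {'data_sources': (['LIMS'], ['LIMS', 'MES'])}
```

The diff gives reviewers a precise starting point: here, only the tests that depend on data sources would need to be reconsidered.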

Risk assessment in cIV is ongoing. Validation rigor is adjusted according to how strongly the model's output influences a decision and how severe the consequences of an error would be. This ensures that high-risk models receive proportionate scrutiny.
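A risk-tiering rule of this kind might look like the sketch below. The two inputs are called `influence` and `consequence` here as shorthand for the model's impact and the consequences of an error. The three-level scale, the multiplication rule, the cut-offs, and the `RIGOR` mapping are assumptions made for illustration; they are not taken from the FDA guidance or from cIV.

```python
from enum import IntEnum


class Level(IntEnum):
    LOW = 1
    MEDIUM = 2
    HIGH = 3


def model_risk(influence: Level, consequence: Level) -> Level:
    """Combine model influence and error consequence into a risk tier.

    The scoring rule (product of the two levels, then bucketed) is an
    illustrative assumption, not a regulatory formula.
    """
    score = influence * consequence  # ranges from 1 to 9
    if score >= 6:
        return Level.HIGH
    if score >= 3:
        return Level.MEDIUM
    return Level.LOW


# Hypothetical mapping from risk tier to validation rigor.
RIGOR = {
    Level.LOW: "spot-check outputs, annual review",
    Level.MEDIUM: "independent test set, quarterly review",
    Level.HIGH: "independent test set, stress tests, human review of every output",
}

tier = model_risk(influence=Level.MEDIUM, consequence=Level.HIGH)
print(tier.name, "->", RIGOR[tier])
# HIGH -> independent test set, stress tests, human review of every output
```

Because the assessment is ongoing, the same function would be re-run whenever the model's role in a decision changes, for example when a human review step is removed.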

Validation planning is automated, generating strategies from use case, context, and risk. Plans cover metrics, tests, and acceptance criteria aligned with regulations.

Testing is built into the platform. Models are benchmarked, stress-tested, and checked for robustness, with real-time results forming continuous validation evidence.
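To show how test results can become validation evidence, here is a minimal sketch that compares measured metrics with acceptance criteria from a plan and returns a timestamped record. The `Criterion` type, the `evaluate` function, the metric names, and the thresholds are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class Criterion:
    metric: str
    minimum: float  # the measured value must be at least this


def evaluate(results: dict, criteria: list) -> dict:
    """Compare measured metrics with acceptance criteria and build an evidence record."""
    checks = []
    for c in criteria:
        measured = results.get(c.metric)
        # A metric that was never measured fails, rather than passing silently.
        passed = measured is not None and measured >= c.minimum
        checks.append({
            "metric": c.metric,
            "minimum": c.minimum,
            "measured": measured,
            "passed": passed,
        })
    return {
        "evaluated_at": datetime.now(timezone.utc).isoformat(),
        "checks": checks,
        # An empty plan is never accepted: no criteria means no evidence.
        "accepted": bool(checks) and all(check["passed"] for check in checks),
    }


plan = [Criterion("accuracy", 0.90), Criterion("recall", 0.92)]
record = evaluate({"accuracy": 0.95, "recall": 0.90}, plan)

print(record["accepted"])  # False, because recall is below its minimum
failed = [c["metric"] for c in record["checks"] if not c["passed"]]
print(failed)  # ['recall']
```

Treating unmeasured metrics and empty plans as failures is a deliberate choice in this sketch: it keeps a gap in testing from being mistaken for a pass.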

6.0. Validation of AI Outputs: Extending Beyond the Model

A key advance in cIV is extending validation beyond the model to the outputs it generates. AI-generated documents such as validation plans, test scripts, and reports must meet the same regulatory standards as traditionally authored content.

cIV ensures every output is:

- Linked to the model version that generated it

- Traceable to the data used

- Associated with a specific Context of Use

- Subject to review and approval workflows

This creates a closed-loop system in which outputs are generated efficiently and remain traceable and auditable by design.
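The four links listed above can be sketched as a provenance record attached to each output. This is an illustration only: the `OutputRecord` fields, the `register_output` and `missing_links` helpers, and the identifier values are hypothetical and do not describe the cIV data model.

```python
import hashlib
from dataclasses import dataclass, fields
from typing import Optional


@dataclass(frozen=True)
class OutputRecord:
    """Provenance for one AI-generated artifact."""
    content_sha256: str                  # ties the record to the exact content
    model_version: Optional[str] = None  # model version that generated it
    dataset_id: Optional[str] = None     # data used
    cou_version: Optional[str] = None    # Context of Use it was generated under
    approved_by: Optional[str] = None    # set by the review and approval workflow


def register_output(content: str, **links) -> OutputRecord:
    digest = hashlib.sha256(content.encode("utf-8")).hexdigest()
    return OutputRecord(content_sha256=digest, **links)


def missing_links(record: OutputRecord) -> list:
    """Names of provenance fields that are still empty."""
    return [f.name for f in fields(record) if getattr(record, f.name) is None]


draft = register_output(
    "Test script TS-001: verify alarm limits",
    model_version="1.4.0",
    dataset_id="ds-2024-07",
    cou_version="3",
)

print(missing_links(draft))  # ['approved_by']
```

A release gate could then refuse any output whose `missing_links` result is not empty, which is one simple way to close the loop between generation and approval.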

7.0. Continuous Credibility: Monitoring, Drift, and Revalidation

A major challenge in AI validation is maintaining credibility over time. This difficulty arises because models tend to drift as data distributions change, processes evolve, or external conditions shift gradually or suddenly. Traditional validation methods, which rely on periodic reviews and assessments, are insufficient to address these ongoing changes effectively.

cIV introduces continuous monitoring as a core capability to overcome these challenges. Model performance is tracked in real time, so deviations from expected behavior are detected early. When performance falls outside predefined thresholds, revalidation workflows are triggered automatically. Credibility is therefore not only established at release but sustained throughout the model's lifecycle.
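A threshold-based trigger of this kind can be sketched with a rolling window over recent predictions. The `PerformanceMonitor` class, the choice of accuracy as the metric, the window size, and the callback are all assumptions for illustration; a real deployment would likely track several metrics as well as input-data drift.

```python
from collections import deque
from typing import Callable


class PerformanceMonitor:
    """Rolling-window accuracy check that fires a callback on a threshold breach."""

    def __init__(self, window: int, min_accuracy: float,
                 on_breach: Callable[[float], None]):
        self.outcomes = deque(maxlen=window)  # True for each correct prediction
        self.min_accuracy = min_accuracy
        self.on_breach = on_breach
        self.breached = False

    def record(self, prediction, actual) -> None:
        self.outcomes.append(prediction == actual)
        if len(self.outcomes) < self.outcomes.maxlen:
            return  # wait for a full window before judging performance
        accuracy = sum(self.outcomes) / len(self.outcomes)
        if accuracy < self.min_accuracy and not self.breached:
            self.breached = True  # fire once, until reset after revalidation
            self.on_breach(accuracy)

    def reset(self) -> None:
        self.outcomes.clear()
        self.breached = False


triggered = []  # stands in for a real revalidation workflow
monitor = PerformanceMonitor(window=5, min_accuracy=0.8, on_breach=triggered.append)

# Five correct predictions, then three wrong ones.
for prediction, actual in [(1, 1)] * 5 + [(1, 0)] * 3:
    monitor.record(prediction, actual)

print(triggered)  # [0.6]
```

The callback fires once, when the window first drops below the threshold, and stays quiet until `reset` is called after revalidation. This avoids opening a new workflow for every subsequent prediction.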

8.0. The Strategic Advantage of System-Driven Credibility

The FDA’s framework makes clear that credibility is the key factor that defines regulatory success. Organizations that demonstrate robust and continuous validation processes will be in a much better position to gain regulatory approval. Moreover, such organizations can more effectively scale AI technologies across their operations while maintaining compliance consistently over time.

cIV enables this by embedding continuous validation directly into AI systems. It replaces fragmented processes and manual coordination with a unified platform that maintains consistency, traceability, and audit readiness throughout the AI lifecycle.

This shift is not just about meeting compliance requirements; it also creates a strong foundation for scalable and trustworthy AI implementations. By adopting continuous validation, organizations can build AI systems that are reliable, transparent, and capable of evolving securely over time.

9.0. Final Thoughts: Engineering Trust

The journey from principles to practice to execution has reached a new milestone: credibility. It marks a significant evolution in how AI is perceived and used in regulated industries.

Credibility transforms AI from a promising capability into a trusted component of regulated systems. It allows organizations to move beyond the experimental phase and integrate AI into critical decision-making processes that affect business outcomes and compliance.

With cIV, credibility is no longer treated as an afterthought or a simple documentation exercise. Instead, it is intentionally engineered and embedded into every stage of the AI lifecycle. This approach ensures that AI systems are not only effective and defensible but also fully compliant with existing standards and prepared to meet future regulatory demands.

10.0. About the Authors

Nagesh Nama

CEO, xLM Continuous Intelligence | Founder, ValiMation

Nagesh is a pioneer in AI/ML-driven GxP compliance with nearly three decades of experience helping pharmaceutical, biotech, and medical device companies navigate validation, data integrity, and regulatory compliance. He is the founder and CEO of both ValiMation (founded 1996) and xLM Continuous Intelligence — the company that first introduced a Continuous Validation platform supporting IaaS/PaaS/SaaS environments compliant with 21 CFR Part 11 and Annex 11. Today, xLM offers a comprehensive suite of continuously validated AI/ML managed services spanning intelligent validation (cIV), predictive maintenance, temperature mapping, and GxP AI agents. Nagesh is a member of the Forbes Technology Council and the Fast Company Executive Board, a contributor to Forbes and Fast Company, and has been featured on Microsoft's AI Agents Vlog. He holds an M.S. in Manufacturing Engineering from the University of Massachusetts, Amherst.

Kashyap Joshi

Program Manager, AI/ML ContinuousOS Apps | xLM Continuous Intelligence

Kashyap Joshi is a Program Manager at xLM, where he leads the implementation of complex AI systems for life sciences organizations by aligning stringent GxP regulatory requirements with next‑generation technology and xLM’s ContinuousOS Suite of Apps to deliver measurable ROI, continuous compliance, and long‑term transformation for clients across pharma, biotech, and medical devices.
